Getting your pages indexed starts with one simple file: your sitemap.

It’s a list of your website’s important URLs that helps search engines find and understand your content faster.

But not all sitemaps work well. An index-friendly sitemap only includes pages that deserve to be indexed: clean, high-quality, and ready to rank.

If your sitemap is messy or outdated, search engines may ignore it or waste time on the wrong pages.

This guide shows you how to build a sitemap that actually helps your SEO.

Still confused about why your site isn’t being indexed? This guide on why Google isn’t indexing your pages will help clarify things.

What Is a Sitemap?

A sitemap is a simple file that lists the important pages on your website and tells search engines how your content is organized.

In most cases, this file is an XML sitemap, which is a structured document written in a format search engines can easily read.

It includes your key URLs along with extra details like when a page was last updated, helping search engines understand what to crawl and prioritize.

It’s important to separate this from an HTML sitemap, which is built for users, not search engines.

An HTML sitemap is a visible page that helps visitors navigate your site, while an XML sitemap works behind the scenes as a technical guide for crawlers.

Search engines use XML sitemaps as a roadmap, allowing them to discover pages faster, understand your site structure, and identify new or updated content without relying only on internal links.

This becomes especially useful when your site is new and has few backlinks, when it’s large and difficult to crawl, or when some pages are not easily reachable through normal navigation.

A sitemap helps ensure those pages are still found and considered for indexing.
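To make the format concrete, here is a minimal sketch of what an XML sitemap contains and how a script might read it, roughly the way a crawler would. The `example.com` URLs and dates are placeholders, and `read_sitemap` is a hypothetical helper name, not part of any tool mentioned in this guide.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Placeholder sitemap; the URLs and dates are made up for illustration.
SAMPLE_SITEMAP = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2024-05-01</lastmod>
  </url>
  <url>
    <loc>https://example.com/blog/first-post</loc>
  </url>
</urlset>"""


def read_sitemap(xml_text):
    """Return (loc, lastmod) pairs; lastmod is None when the tag is absent."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    entries = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", namespaces=NS)
        lastmod = url.findtext("sm:lastmod", namespaces=NS)
        entries.append((loc.strip(), lastmod))
    return entries


for loc, lastmod in read_sitemap(SAMPLE_SITEMAP):
    print(loc, lastmod)
```

Only `<loc>` is required for each entry; `<lastmod>` is optional, which is why the second URL comes back with `None`.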

Why Your Sitemap Might Not Be Index-Friendly

Including Non-Indexable Pages

One of the most common problems is adding pages that search engines are not allowed to index.

This includes URLs with a noindex tag, redirected pages, or error pages like 404s.

When these appear in your sitemap, you send mixed signals, meaning your sitemap says “index this,” while your site says “don’t.”

Search engines don’t trust conflicting instructions, so they may ignore parts of your sitemap or reduce how often they crawl your site.

Too Many Low-Quality or Thin Pages

A sitemap should highlight your best content, not everything you’ve ever published.

If it includes thin pages with little value, duplicate content, or auto-generated pages, it weakens the overall quality signal of your site.

Search engines aim to index useful content. When your sitemap is filled with low-value URLs, it becomes harder for them to identify which pages actually matter.

Outdated or Broken URLs

Sitemaps need to stay current. If they contain URLs that no longer exist, have been moved, or return errors, search engines waste time trying to crawl pages that go nowhere.

This slows down the discovery of your important content and reduces crawl efficiency. Over time, it can also signal poor site maintenance.

Poor Site Structure

Even with a sitemap, your internal structure still matters.

If your pages are hard to reach through normal navigation or are buried too deeply, search engines may treat them as less important.

A sitemap is meant to support your structure, not replace it. When both are misaligned, it creates confusion about which pages should be prioritized.

How These Issues Affect Crawling and Indexing

All of these problems lead to one core issue: wasted crawl budget. Search engines have limited time and resources for each site.

If they spend that time on broken, low-quality, or blocked pages, they may miss or delay indexing your valuable content.

An index-friendly sitemap removes this friction. It guides search engines clearly, helping them focus on the pages that deserve visibility.

Key Elements of an Index-Friendly Sitemap

Only Include Indexable URLs

Your sitemap should list only pages that you want search engines to index.

That means no pages blocked by robots.txt, no URLs with a noindex tag, and no redirects or error pages.

Search engines like Google treat sitemaps as a strong hint, not a command, so if your file includes URLs that shouldn’t be indexed, it creates confusion and reduces trust.

Keeping your sitemap clean helps crawlers focus only on pages that matter.
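One of these checks, the robots.txt rule, can be sketched with Python's standard library. This is a rough illustration, not a full audit: the robots.txt content and URLs are invented, `allowed_by_robots` is a hypothetical helper, and Python's parser does not interpret wildcard rules the way Google's does, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt, urls, user_agent="Googlebot"):
    """Keep only the URLs that the given robots.txt text allows for user_agent."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if parser.can_fetch(user_agent, url)]


# Placeholder rules and URLs for illustration only.
ROBOTS_TXT = """User-agent: *
Disallow: /admin/
Disallow: /cart
"""

CANDIDATES = [
    "https://example.com/",
    "https://example.com/admin/settings",
    "https://example.com/blog/post",
    "https://example.com/cart",
]

print(allowed_by_robots(ROBOTS_TXT, CANDIDATES))
```

Anything this filter drops is a URL your site already tells crawlers to stay away from, so it has no place in the sitemap.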

Use Clean, Canonical URLs

Every URL in your sitemap should be the main version of that page. This is called the canonical URL.

Avoid adding duplicate versions of the same page, such as URLs with tracking parameters or different variations of the same content.

When search engines see multiple versions, they have to decide which one to index, and that can slow things down or dilute ranking signals.
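As a rough sketch of that cleanup, the snippet below strips common tracking parameters and fragments, then removes the duplicates that result. The parameter list is an assumption you would adjust for your own analytics setup, and `strip_tracking` and `dedupe` are hypothetical names. It does not replace checking the canonical URL each page actually declares.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; extend this for your own setup.
TRACKING_PARAMS = {"gclid", "fbclid"}
TRACKING_PREFIXES = ("utm_",)


def strip_tracking(url):
    """Drop tracking parameters and the fragment, and lowercase the host."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
        and not key.lower().startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), parts.path or "/", urlencode(kept), "")
    )


def dedupe(urls):
    """Return cleaned URLs in their original order, without repeats."""
    seen = set()
    result = []
    for url in urls:
        clean = strip_tracking(url)
        if clean not in seen:
            seen.add(clean)
            result.append(clean)
    return result


print(dedupe([
    "https://example.com/shoes?utm_source=newsletter",
    "https://example.com/shoes",
    "https://example.com/shoes?gclid=abc123",
]))
```

All three inputs collapse to a single entry, which is the version that belongs in the sitemap.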

Keep It Updated Automatically

A sitemap should always reflect your current website. When you publish new content, update old pages, or remove URLs, your sitemap should update automatically.

This ensures search engines always have the latest view of your site.

If your sitemap is outdated, crawlers may miss new content or waste time revisiting pages that no longer exist.

Limit Sitemap Size (50,000 URLs / 50MB)

Search engines set clear limits for sitemap files. Each sitemap can contain up to 50,000 URLs and must not exceed 50MB in size (uncompressed).

If your site is larger, you need to split your URLs into multiple sitemaps.

This keeps your files manageable and ensures search engines can process them efficiently without errors.
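Splitting by the 50,000-URL limit is simple to sketch. The helper names below are hypothetical, and the size check assumes you already have the generated file as bytes.

```python
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024  # 50MB, measured on the uncompressed file


def chunk_urls(urls, max_urls=MAX_URLS):
    """Yield consecutive slices of at most max_urls URLs, one per sitemap file."""
    for start in range(0, len(urls), max_urls):
        yield urls[start:start + max_urls]


def within_size_limit(xml_bytes):
    """True when an uncompressed sitemap file fits the 50MB limit."""
    return len(xml_bytes) <= MAX_BYTES


# Placeholder URL list to show how a large site would be split.
all_urls = [f"https://example.com/product/{i}" for i in range(120_000)]
for number, chunk in enumerate(chunk_urls(all_urls), start=1):
    print(f"sitemap-{number}.xml would hold {len(chunk)} URLs")
```

A file can stay under 50,000 URLs and still exceed 50MB if the URLs are long, so it is worth checking both limits.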

Proper Use of Sitemap Tags

Sitemaps can include extra tags that give search engines more context.

The <lastmod> tag is the most useful, as it tells crawlers when a page was last updated, helping them decide when to recrawl it.

The <priority> tag is optional, and Google has said it ignores the value; search engines determine importance on their own.

The <changefreq> tag suggests how often a page changes, but Google ignores it as well, and under the sitemap protocol it is only ever a hint, not a rule.

Use these tags carefully and keep them accurate.
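Here is a minimal sketch of one sitemap entry that carries only `<loc>` and an accurate `<lastmod>`. It assumes your CMS can give you a timezone-aware modification time; `url_entry` is a hypothetical helper, and the URL is a placeholder.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone


def url_entry(loc, modified_at):
    """Build one <url> element with <loc> and <lastmod>.

    modified_at must be timezone-aware; a naive datetime would be
    interpreted as local time by astimezone().
    """
    node = ET.Element("url")
    ET.SubElement(node, "loc").text = loc
    # W3C Datetime with an explicit UTC offset.
    stamp = modified_at.astimezone(timezone.utc).isoformat(timespec="seconds")
    ET.SubElement(node, "lastmod").text = stamp
    return node


entry = url_entry(
    "https://example.com/blog/post",
    datetime(2024, 5, 1, 12, 30, tzinfo=timezone.utc),
)
print(ET.tostring(entry, encoding="unicode"))
```

The sketch leaves out `<priority>` and `<changefreq>` on purpose, since they add size without adding much signal.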

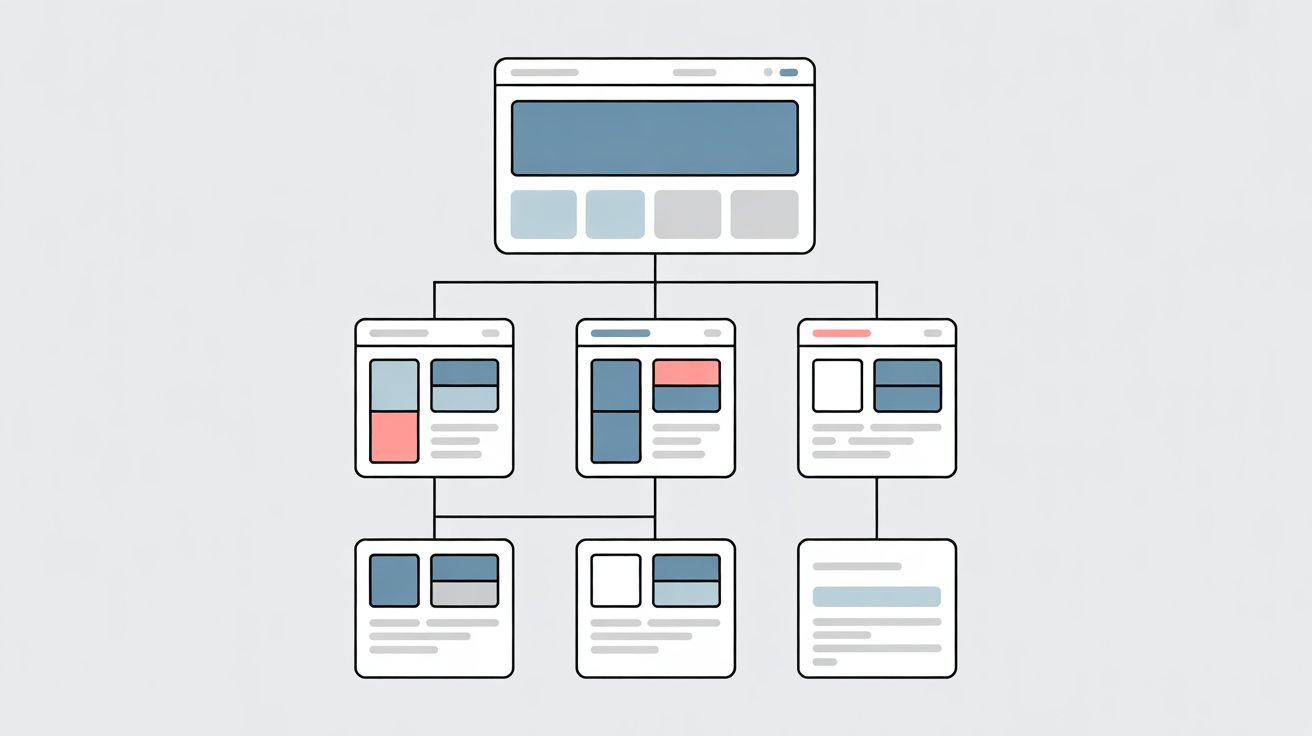

Use Multiple Sitemaps When Needed (Sitemap Index)

If your site has many pages or different types of content, it’s better to organize them into separate sitemaps.

For example, you can have one for blog posts, one for pages, and another for products. These are then grouped in a sitemap index file, which acts as a master list.

This structure helps search engines crawl your site more efficiently and makes it easier for you to manage and monitor different sections.
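A sitemap index is just another small XML file that points to your other sitemaps. The sketch below builds one; the file names such as `sitemap-posts.xml` are assumptions for illustration, and `build_sitemap_index` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap_index(sitemap_urls):
    """Return a <sitemapindex> document listing each child sitemap URL."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        sitemap = ET.SubElement(root, "sitemap")
        ET.SubElement(sitemap, "loc").text = url
    xml_bytes = ET.tostring(root, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


print(build_sitemap_index([
    "https://example.com/sitemap-posts.xml",
    "https://example.com/sitemap-pages.xml",
    "https://example.com/sitemap-products.xml",
]))
```

You would then submit only the index file, and search engines follow it to each child sitemap.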

Step-by-Step: How to Create an Index-Friendly Sitemap

Step 1: Audit Your Website URLs

Start by understanding exactly what exists on your site. You need to separate pages that should be indexed from those that shouldn’t.

Indexable pages are your main content (pages you want to rank), while non-indexable pages include duplicates, filtered URLs, admin pages, or anything blocked with noindex.

At the same time, look for thin or duplicate content, because these weaken your sitemap’s quality signal.

Use tools like site crawlers (e.g., Screaming Frog), SEO platforms, or your CMS to scan your site and extract all URLs.

This gives you a full picture of what search engines might see and helps you take control before building your sitemap.

Step 2: Remove Problematic URLs

Once you have your list, clean it aggressively. Remove any URLs that send the wrong signals, including noindex pages, redirects (3xx), and error pages like 404 or 500.

These should never appear in a sitemap because the file is your own statement of which URLs matter, and including bad URLs undermines that signal.

Also, remove duplicate URLs and variations of the same page. What remains should be a focused list of high-quality, canonical pages that deserve to be indexed.

This step alone can significantly improve crawl efficiency and prevent wasted crawl budget.
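If your crawler can export its results, the cleanup can be sketched as a simple filter. The `CrawlRecord` fields below are an assumed shape, not the export format of Screaming Frog or any other tool, so you would map your own columns onto them.

```python
from dataclasses import dataclass


@dataclass
class CrawlRecord:
    url: str
    status: int      # HTTP status code returned for the URL
    noindex: bool    # True if the page carries a noindex directive
    canonical: str   # canonical URL the page declares, or "" if none


def sitemap_candidates(records):
    """Keep URLs that return 200, allow indexing, and are their own canonical."""
    keep = []
    seen = set()
    for record in records:
        if record.status != 200:
            continue  # drops redirects (3xx) and errors (4xx, 5xx)
        if record.noindex:
            continue
        if record.canonical and record.canonical != record.url:
            continue  # the page points at a different preferred version
        if record.url in seen:
            continue
        seen.add(record.url)
        keep.append(record.url)
    return keep


# Placeholder crawl data for illustration.
crawl = [
    CrawlRecord("https://example.com/", 200, False, "https://example.com/"),
    CrawlRecord("https://example.com/old", 301, False, ""),
    CrawlRecord("https://example.com/thanks", 200, True, ""),
    CrawlRecord("https://example.com/shoes?sort=price", 200, False, "https://example.com/shoes"),
    CrawlRecord("https://example.com/shoes", 200, False, "https://example.com/shoes"),
]
print(sitemap_candidates(crawl))
```

A filter like this catches the technical problems only. Thin or low-value pages still need a human decision.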

Step 3: Generate Your Sitemap

Now, create the actual sitemap file. If you’re using a CMS like WordPress, plugins can automatically generate and maintain your sitemap as your site grows.

For more control, you can use standalone generators or SEO tools that crawl your site and build a sitemap based on your selected URLs.

Advanced users may create custom XML sitemaps for precise control over structure and updates.

No matter the method, ensure your sitemap only includes clean, canonical URLs and reflects your audit decisions.

Avoid static generators unless you plan to update the file regularly, as outdated sitemaps quickly lose value.

Step 4: Validate Your Sitemap

Before submitting, check that your sitemap works correctly. Look for formatting errors in the XML structure, broken links, or URLs that shouldn’t be there.

Even small issues, like missing tags or incorrect URLs, can prevent search engines from properly reading your sitemap.

Use validation tools or SEO platforms to test your file and confirm it follows protocol standards.

This step ensures your sitemap is not only clean but also technically sound.
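A basic structural check can be sketched in a few lines. This is not a full protocol validator: it only confirms that the file parses, uses the expected root element, stays within the limits, and lists absolute URLs. `validate_sitemap` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
MAX_URLS = 50_000
MAX_BYTES = 50 * 1024 * 1024


def validate_sitemap(xml_bytes):
    """Return a list of problems found; an empty list means no issue was detected."""
    problems = []
    if len(xml_bytes) > MAX_BYTES:
        problems.append("file is larger than 50MB uncompressed")
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return problems + [f"not well-formed XML: {exc}"]
    if root.tag != NS + "urlset":
        problems.append("root element is not <urlset> in the sitemap namespace")
    urls = root.findall(NS + "url")
    if len(urls) > MAX_URLS:
        problems.append(f"{len(urls)} URLs exceeds the 50,000 limit")
    for position, url in enumerate(urls, start=1):
        loc = (url.findtext(NS + "loc") or "").strip()
        parts = urlsplit(loc)
        if parts.scheme not in ("http", "https") or not parts.netloc:
            problems.append(f"entry {position}: missing or non-absolute <loc>")
    return problems


sample = (
    b'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    b"<url><loc>https://example.com/</loc></url>"
    b"<url><loc>/relative-path</loc></url>"
    b"</urlset>"
)
print(validate_sitemap(sample))
```

Checking whether each URL actually returns a 200 status is a separate step that needs live requests, which this sketch leaves out.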

Step 5: Submit to Google

Once validated, submit your sitemap through Google Search Console.

Go to the “Sitemaps” section under Indexing, enter your sitemap URL (usually /sitemap.xml), and submit it.

After submission, you can track how many pages were discovered and indexed, and check for errors directly in the report.

If your sitemap exceeds the limits of 50,000 URLs or 50MB, you should split it into multiple files and use a sitemap index to organize them.

This final step connects your work to search engines and lets you monitor performance over time.

Best Practices for Keeping Your Sitemap Index-Friendly

Update Automatically When Publishing New Content

Your sitemap should update the moment you publish or change a page. This keeps search engines aware of new content without delays.

Platforms like WordPress and most modern CMS tools can handle this automatically, which reduces the risk of forgetting updates.

Search engines such as Google rely on fresh signals to decide what to crawl next, so an up-to-date sitemap helps your new pages get discovered faster.

Remove Outdated or Deleted Pages

If a page is removed or no longer relevant, it should also be removed from your sitemap.

Keeping dead URLs in your sitemap wastes crawl resources and sends poor quality signals.

Over time, this can slow down how quickly your important pages are revisited.

A clean sitemap reflects a well-maintained site, and that builds trust with search engines.

Prioritize High-Quality Content Only

Your sitemap is not a storage list for every URL. It’s a priority list.

Only include pages that provide real value, such as in-depth articles, key landing pages, or important product pages.

Thin or low-value pages dilute the effectiveness of your sitemap and make it harder for search engines to identify what matters most.

Focusing on quality ensures your strongest pages get the attention they deserve.

Monitor Sitemap Health Regularly

Submitting a sitemap is not a one-time task. You need to check it regularly to catch issues early.

Use tools like Google Search Console to track how many submitted pages are actually indexed and to identify errors.

If there’s a gap between submitted and indexed pages, it often points to quality or technical problems that need fixing.

Align Sitemap with Internal Linking Structure

Your sitemap should support your site structure, not fight against it. If a page is in your sitemap, it should also be easy to find through internal links.

Search engines use both signals together to understand importance and relevance.

When your sitemap and internal linking are aligned, crawling becomes more efficient, and your key pages are more likely to be indexed and ranked correctly.
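One way to check this alignment is to look for orphan pages: URLs that are in the sitemap but that no other page links to. The sketch below assumes you already have a map of internal links from a crawl; the data shape and the `find_orphans` name are hypothetical.

```python
def find_orphans(sitemap_urls, internal_links):
    """Return sitemap URLs that no other page links to.

    internal_links maps each source URL to the set of URLs it links to.
    Links from a page to itself are not counted.
    """
    linked = set()
    for source, targets in internal_links.items():
        linked.update(target for target in targets if target != source)
    return sorted(url for url in sitemap_urls if url not in linked)


# Placeholder data for illustration.
sitemap_urls = [
    "https://example.com/",
    "https://example.com/blog/post-a",
    "https://example.com/blog/post-b",
]
internal_links = {
    "https://example.com/": {"https://example.com/blog/post-a"},
    "https://example.com/blog/post-a": {"https://example.com/"},
}
print(find_orphans(sitemap_urls, internal_links))
```

Any URL this returns either needs internal links pointing to it or a second look at whether it belongs in the sitemap at all.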

Advanced Tips

Use Separate Sitemaps for Different Content Types

As your site grows, a single sitemap becomes harder to manage and less effective.

Splitting your sitemap into focused sections, such as blog posts, core pages, products, and media (images or videos), helps search engines understand your site more clearly.

Each sitemap becomes more targeted, which improves crawl efficiency and makes it easier to spot issues within specific sections.

Search engines like Google support sitemap index files, allowing you to group multiple sitemaps under one structure while keeping everything organized.

Optimize Crawl Budget with Smarter Sitemap Structure

Every website has a limited crawl budget, which is the amount of time and resources search engines spend crawling your site.

A well-structured sitemap helps direct that budget toward your most important pages.

By grouping similar content and excluding low-value URLs, you reduce wasted crawling and increase the chances that key pages are discovered and refreshed more often.

This becomes especially important for larger sites where inefficient crawling can delay indexing.

Use <lastmod> Strategically for Faster Recrawling

The <lastmod> tag tells search engines when a page was last updated, but it only works if it’s accurate.

When used correctly, it can signal that content has changed and should be recrawled sooner. This is useful for frequently updated pages like blog posts or product listings.

However, setting the same date for every page or updating it without real changes can cause search engines to ignore the signal altogether.

Precision matters more than frequency here.
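One way to keep `<lastmod>` honest is to change it only when the page content actually changes. The sketch below compares a hash of the content with the hash stored at the previous build. The storage format is an assumption, the function names are hypothetical, and hashing the full HTML would also pick up template changes, so a real setup might hash only the main content.

```python
import hashlib


def content_fingerprint(html):
    """Return a SHA-256 hex digest of the page content."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def next_lastmod(previous, html, today):
    """Return the record to store for this build.

    previous is the record from the last build ({"hash": ..., "lastmod": ...})
    or None for a new page. The date only moves when the content hash changes.
    """
    digest = content_fingerprint(html)
    if previous and previous["hash"] == digest:
        return {"hash": digest, "lastmod": previous["lastmod"]}
    return {"hash": digest, "lastmod": today}


first = next_lastmod(None, "<p>Original text</p>", "2024-05-01")
unchanged = next_lastmod(first, "<p>Original text</p>", "2024-06-01")
edited = next_lastmod(first, "<p>Updated text</p>", "2024-06-01")
print(first["lastmod"], unchanged["lastmod"], edited["lastmod"])
```

With this approach, a rebuild that touches nothing leaves every date alone, so the dates that do change carry real information.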

Avoid Overloading Google with Unnecessary URLs

More URLs do not mean better SEO. Adding every possible page (especially filtered URLs, tag pages, or duplicates) can overwhelm your sitemap and reduce its effectiveness.

Search engines may spend time crawling pages that don’t need to be indexed, which delays attention to your valuable content.

Keeping your sitemap lean and focused ensures that only the most important pages are prioritized, giving you better control over how your site is crawled and indexed.

Common Sitemap Mistakes to Avoid

Submitting Multiple Conflicting Sitemaps

Using more than one sitemap is fine, but problems start when they don’t match.

If different sitemaps include different versions of the same pages or list URLs inconsistently, search engines receive mixed signals about what should be indexed.

This can slow down crawling or cause the wrong pages to be prioritized.

Search engines like Google recommend keeping your sitemap structure clear and consistent, often through a single sitemap index that organizes all sub-sitemaps properly.

Including Parameter-Based URLs

URLs with parameters such as filters, sorting options, or tracking codes often create multiple versions of the same page.

Adding these to your sitemap can lead to duplicate content issues and wasted crawl resources.

Search engines may spend time crawling variations instead of focusing on your main pages.

Your sitemap should only include clean, static URLs that represent the primary version of each page.

Ignoring Canonical Tags

Canonical tags tell search engines which version of a page is the preferred one.

If your sitemap includes URLs that don’t match their canonical versions, it creates confusion.

For example, if a page points to a different canonical URL but both appear in the sitemap, search engines have to decide which one to trust.

This can weaken indexing signals and delay proper page selection.
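To catch this, you can compare each sitemap URL with the canonical tag on the page itself. The sketch below reads the tag from HTML you have already downloaded. It assumes the canonical `href` is an absolute URL and does an exact string comparison, so relative hrefs or trailing-slash differences would need extra handling. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonical = attributes["href"]


def canonical_mismatch(sitemap_url, html):
    """True when the page declares a canonical URL different from sitemap_url."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical is not None and finder.canonical != sitemap_url


# Placeholder page for illustration.
page = '<html><head><link rel="canonical" href="https://example.com/shoes"></head></html>'
print(canonical_mismatch("https://example.com/shoes?color=red", page))
print(canonical_mismatch("https://example.com/shoes", page))
```

Any URL that comes back as a mismatch should be swapped for its canonical version or removed from the sitemap.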

Not Updating the Sitemap After Changes

A sitemap is only useful if it reflects your current site. When pages are added, removed, or updated, your sitemap should change too.

Leaving outdated URLs in place can lead search engines to crawl pages that no longer exist or miss new ones entirely.

Over time, this reduces the effectiveness of your sitemap and slows down indexing.

Assuming Submission Guarantees Indexing

Submitting a sitemap does not mean your pages will be indexed. It only helps search engines discover your URLs more efficiently.

Indexing still depends on factors like content quality, internal linking, and overall site trust.

A well-built sitemap improves your chances, but it does not override poor content or technical issues.

How to Check If Your Sitemap Is Working

Use Google Search Console

The easiest way to check your sitemap is through Google Search Console. After submitting your sitemap, go to the “Sitemaps” section to see its status.

Here, you can confirm if it was successfully read and how many URLs were discovered.

This is your starting point. If there are errors, they will show up here, giving you a clear signal that something needs fixing.

Check the Coverage Report

The Page indexing report (previously called Coverage) shows what’s actually happening with your pages. It separates URLs into indexed and not indexed, and lists a reason for each group that was left out.

This helps you understand whether search engines are accepting your sitemap or ignoring parts of it.

If many pages are excluded, it usually points to issues like low-quality content, duplicates, or technical restrictions.

Compare Indexed vs Submitted Pages

One of the most important checks is comparing how many pages you submitted versus how many are indexed.

If there’s a large gap, it means search engines are choosing not to index some of your pages.

This is normal to a degree, but a big difference signals a problem. It could be content quality, poor internal linking, or including the wrong URLs in your sitemap.
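The comparison itself is simple set arithmetic. The sketch below assumes you have two lists on hand: the URLs in your sitemap and the URLs reported as indexed, for example from a manual export. It makes no API calls, and `index_gap` is a hypothetical helper.

```python
def index_gap(submitted, indexed):
    """Return (share of submitted URLs that are indexed, sorted list of the rest)."""
    submitted_set = set(submitted)
    indexed_set = set(indexed)
    missing = sorted(submitted_set - indexed_set)
    if not submitted_set:
        return 0.0, missing
    rate = len(submitted_set & indexed_set) / len(submitted_set)
    return rate, missing


# Placeholder data for illustration.
submitted = [
    "https://example.com/",
    "https://example.com/about",
    "https://example.com/blog/post-a",
    "https://example.com/blog/post-b",
]
indexed = [
    "https://example.com/",
    "https://example.com/about",
    "https://example.com/blog/post-a",
]
rate, missing = index_gap(submitted, indexed)
print(f"{rate:.0%} indexed; not indexed: {missing}")
```

The list of missing URLs is the useful part, because it tells you which pages to review for quality, internal links, or technical blocks.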

Monitor Crawl Stats

Crawl stats show how often search engines visit your site and how they use their crawl budget.

If your sitemap is working well, you should see consistent crawling activity focused on your important pages.

If crawling is low or inconsistent, it may mean your sitemap isn’t helping guide search engines effectively.

Spot Indexing Issues Early

Regular checks help you catch problems before they grow. Look for sudden drops in indexed pages, spikes in errors, or warnings about excluded URLs.

These signals tell you when something has changed, whether it’s a technical issue or a content problem.

Staying on top of this data keeps you in control and ensures your sitemap continues to support your indexing goals.

Key Takeaways

- A sitemap helps guide search engines, but it does not guarantee indexing

- Quality matters more than quantity when selecting URLs to include

- Clean, updated, and relevant sitemaps perform best over time

- Regular monitoring is essential to catch and fix issues early

If you submitted your sitemap a few weeks ago and still see no results, read the full guide to indexing issues.

FAQs

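What is an index-friendly sitemap?

A sitemap that only includes high-quality, indexable URLs that you want search engines to crawl and rank.

Does submitting a sitemap guarantee indexing?

No, it only helps search engines discover pages more efficiently.

How often should I update my sitemap?

Automatically, whenever new content is published or old content is removed.

Can I use more than one sitemap?

Yes, especially for large websites. Use a sitemap index file.

Should I include every page in my sitemap?

No, only include pages you want indexed and ranked.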

I’m Alex Crawley, an SEO specialist with 7+ years of hands-on experience helping new websites get indexed on Google. I focus on simplifying technical indexing issues and turning confusing problems into clear, actionable fixes.